A study on the underestimated role of messenger services in Europe

by United Europe’s Young Professional Advisors Oliver Behr and Karl Luis Neumann.

The amount, reach and speed of the misinformation, conspiracy theories and Fake News disseminated in the digital world appear to have increased in recent years. What does that mean for us as individuals and for our societies? Digital private communication channels, such as WhatsApp or iMessage, are a critical yet often overlooked aspect of this development. On these channels, misinformation can spread more effectively than on any other communication channel.

Generally, information is distributed in various ways. Historically, TV programmes, radio stations and newspapers have been prominent information channels. All of them have elaborate information verification mechanisms in place: boards of editors and governance rules help them stop misinformation and unwanted content from being published. Even digital channels such as Twitter and Facebook timelines possess some (possibly insufficient) inherent verification mechanisms. Users can flag and report content, and the platform providers themselves have recently started to fact-check user content.

Digital messaging services where users communicate directly and privately, such as WhatsApp or iMessage, however, have no such information verification mechanisms at all. You can text basically whatever you want to your friends. With just a few clicks, people forward Fake News and conspiracy theories, which then spread like wildfire through WhatsApp groups. As examples from recent elections in Brazil and India show, misinformation spread via WhatsApp has played a major role.

So far, regulators have done little to counter the threat posed by private messaging platforms. Any legislation of this kind is naturally most effective at a supranational level: information crosses borders almost instantly, which makes purely national regulation largely ineffective. Consequently, tackling this topic thoroughly is a major opportunity for the European Union. As with its GDPR privacy regulation, Europe can again take a leading role in defining the basic rules and standards of the digital world, for the benefit of all. This article aims to raise awareness of the underlying systemic dynamics of the topic and their implications. It is high time for European policymakers to act and initiate a discussion about the spread of misinformation on private messaging platforms!

The study was submitted to the University of Oxford's 2020 Map the System Challenge, where it was selected as one of the 32 best projects out of more than 3,500 submissions.

Complexity nourishes extremism

Long before the coronavirus started to spread in Europe, an Italian friend of ours received an audio message containing exaggerated and inaccurate claims about the virus in one of his private WhatsApp group chats. As it turned out, the message had originally been created as a joke by a student in a private WhatsApp group. Within just a few days, however, this particular Fake News message travelled across numerous WhatsApp group chats until it reached our friend, influencing thousands of people along the way.

Our world has become more uncertain. Events like the coronavirus pandemic are confronting politicians and decision-makers with questions to which they have no simple answers. Yet, especially in times of crisis, people demand guidance and clear information. This tension provides fertile ground for Fake News and conspiracy theories like the WhatsApp message in our example. In recent years, voices that promote such misinformation appear to have become louder, more radical and more prominent in public discourse. Misinformation infects discussions and draws them away from a fact-based, rational exchange. Essentially, Fake News dismantles the core of open public exchange, putting liberal democracies at risk.

Fake News and misleading information are hardly a new phenomenon. People with extreme views have always had an audience. For centuries, they found that audience offline, essentially on the streets, at gatherings and public speeches. While this is still the case today, extreme views now also circulate in the digital sphere. The increased prominence of Fake News therefore appears to be related to the emergence of digital communication channels. It seems evident that social media has given extremists a platform that makes their voices heard. But why are digital channels so much more prone to misinformation and extreme content?

Information dissemination before the internet

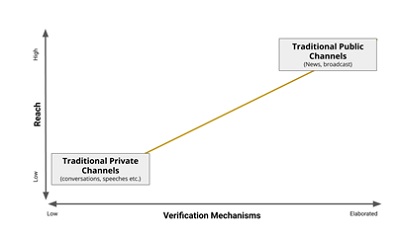

Generally speaking, people who want to send information to an audience do so via communication channels. Here, we distinguish two types: private and public channels. To understand how these channels influence what kind of information we receive, it is helpful to plot them along two dimensions: reach (how many people receive a message) and information verification mechanisms. Information verification mechanisms are the practices and rules used to check the trustworthiness of information spread through a channel.

Before the internet (we call this the “old” world), public channels comprised newspapers, TV channels and radio stations. We describe them as public communication channels because the information they share is publicly accessible and collectively reaches large parts of society. Their wide reach is, to some extent, justified by boards of editors and governance rules that equip these channels with sophisticated verification mechanisms to ensure the accuracy of information before it is published. In summary, these channels combine high reach with elaborate verification mechanisms. In our reach-verification diagram, we plot these channels in the top right corner.

Alongside public channels, information has always spread in more private settings such as bars or club meetings. But here, only a few people hear the message. And, apart from direct opposition from the audience, there are no verification mechanisms. Low reach, low verification. So, let’s plot these channels in the bottom left of the diagram.

If we draw a line between the two types of channels in our diagram, we see that in the old world there was a relative balance between a channel’s reach and its embedded information verification mechanisms. The higher the reach of a message, the stronger the verification.

Digital communication channels tip the system

Now, let’s see what happened with the emergence of digital communication channels.

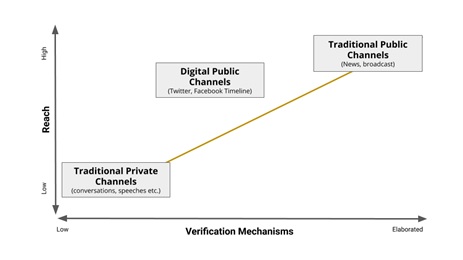

As in the old world, we find a public sphere. Facebook and Twitter are publicly accessible platforms where viral messages can reach many people.

When it comes to information verification mechanisms, platform users can flag or rate content, which is an inherent verification mechanism. Moreover, social media platforms have recently started to fact-check content from users with an extraordinarily high reach. However, these mechanisms are relatively weak compared to, for example, a board of editors that checks information before it is published. It is fair to say that newspapers have more sophisticated verification mechanisms than social media platforms. So, let’s place these digital public channels between traditional private and traditional public channels.

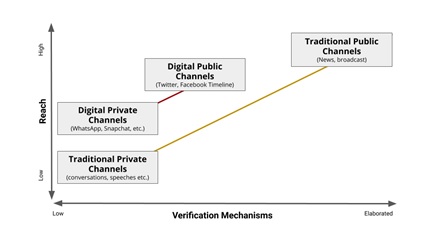

In recent years, digital private messaging services like WhatsApp, iMessage and Facebook Messenger have become extremely popular. These messaging services are a private setting in the digital sphere where verification mechanisms are practically absent: you can text whatever you want to your friends on WhatsApp. Essentially, these private messaging services have as few verification mechanisms as traditional private channels. Information transmitted in a conversation in a pub or via a private WhatsApp chat is not checked for accuracy, appropriateness or context. But now comes the twist: unlike in a private conversation at a bar, forwarding messages to multiple chats or even group chats on WhatsApp makes it possible to reach many people very quickly. Consequently, these channels have a higher reach than traditional private channels while information verification remains low. In our diagram, we therefore place them above the traditional private channels.

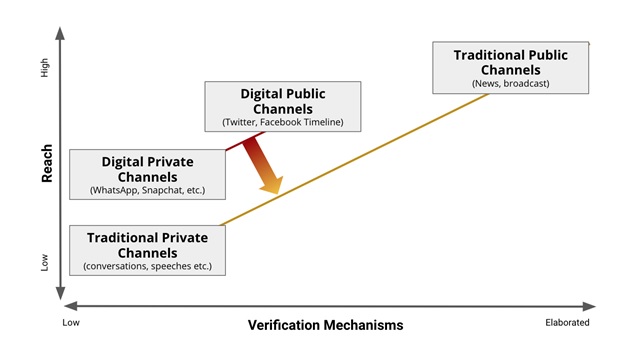

Now, the underlying challenge becomes visible. Compared to traditional channels, the digital ones, and especially private channels such as WhatsApp, do not have appropriate information verification mechanisms in relation to their reach. Misinformation can easily spread on digital private channels and reach a significant number of people, without any mechanism that verifies the trustworthiness of the information.

If we look at the red and yellow lines in our diagram, we see the essence of the problem: the mismatch between reach and verification mechanisms. This mismatch is increasingly being addressed for digital public channels like Facebook and Twitter. These platform providers have started to strengthen their verification mechanisms by implementing fact-checking in response to growing external and internal pressure. Some of Donald Trump’s tweets are now flagged on Twitter if they contain inaccurate or misleading information. Digital private channels, however, have by and large escaped the discussion about Fake News and misinformation. We urgently need to change that, since social media plays a vital role in public discourse. There are numerous examples of Fake News spreading via private digital channels, from the Brazilian election and the coronavirus pandemic to conspiracy theory groups.

Rebalancing digital communication channels

Our model visualises an effective way to prevent the spread of misinformation: We need to bring the digital channels towards the yellow line by limiting their reach or by increasing their verification mechanisms.

Let us look at the first option: “increasing verification mechanisms”. An inherent problem of digital channels, in contrast to traditional public channels, is that content is currently not checked before it is published. The most popular verification mechanism for identifying Fake News on digital public communication channels (e.g. Facebook) is “notice and takedown”, in which content is published first and only taken down once it is flagged by users or internal fact-checking teams. As a result, content can reach an audience even if it is later deleted for violating platform guidelines. On digital private communication channels (e.g. WhatsApp), the notice-and-takedown approach is particularly weak. There are two reasons for this.

Firstly, members of group chats are usually more closely connected on a personal level. This increases trust in the messages shared within these groups and, at the same time, reduces the willingness to “accuse” other group members of spreading Fake News. Who would report their own mother to WhatsApp for spreading inaccurate information? Hence, in these channels, Fake News is less likely to be identified by users in the first place. There are also no streamlined flagging procedures in channels like WhatsApp. It is simply unclear whether and how users can report problematic messages.

Secondly, and more fundamentally, it remains unclear what degree of inaccuracy should prompt action from users or platform providers. Misinformation and most forms of Fake News are not illegal, so it is unclear what kind of content should actually have its reach reduced. And what should happen to messages that have been identified as violating guidelines or breaking the law?

Increasing verification mechanisms always entails limiting freedom of speech. Spreading inaccurate or wrong information is not illegal, and this should not change: the consequences for our liberal societies would be disastrous. Consequently, “notice and takedown” verification mechanisms are a particularly ineffective way to prevent the spread of Fake News in digital private channels. The second option, limiting the reach of shared information, might be a more workable solution. Here, three immediate measures could be a starting point:

Digital social distancing

A first solution would be to reduce the size of group chats. The idea is simple but potentially effective: the damage caused by misinformation and Fake News would be limited because fewer people can be reached with a single message. Additionally, user verification could be required for groups that exceed a certain number of participants.

Highlighting/Stopping repeatedly forwarded messages

The example of the coronavirus voice message underlines the potential damage information can do when it is forwarded on private channels. Recently, WhatsApp limited message forwarding to no more than five group chats at a time, while messages that are forwarded more than ten times are specifically labelled. This is certainly a step in the right direction. However, full transparency with regard to forwarded messages has not yet been achieved. To help users identify repeatedly forwarded messages, it would be useful to display the number of times a message has been forwarded. Providing this information would empower users to critically examine messages with a high reach. Although indirect, this would add some user-driven verification to high-reach messages. Recipients would become more alert and, hopefully, question the nature of the message.

Accountability of platform operators

The digital communication landscape is shaped by a few large companies that each bring together a substantial number of users. These corporations should take responsibility for what happens in their digital spaces. Firstly, private messaging providers (e.g. WhatsApp/Facebook) should provide guidance and assistance in identifying illegal Fake News. For example, these companies could establish helpdesks that act as a first point of contact for users. In practice, users could forward questionable messages to these helpdesks via chat, and the helpdesk staff could then clarify whether the message is truthful. In addition, platform providers should be legally obliged to disclose their efforts against misinformation and Fake News in an annual report.

While these three options might cure some superficial symptoms, the prominence of Fake News and misinformation has far deeper roots. We should look beneath the surface to find ways to rebalance our communication channels. Here are some proposals for how this could work.

Digital literacy

Beneath the spread of Fake News and misinformation lies society’s failure to teach information literacy: how to think critically about the information that confronts us in our modern digital age. Instead, we have prioritised speed over accuracy, sharing over reading, commenting over understanding. In short, we have stopped thinking critically about information, leaving many adrift in the digital wilderness, increasingly without an internal compass to help them find their way to certainty.

The solution is to teach everyone the basics of information literacy, especially in the digital context. Greater awareness alone would limit the spread of inaccurate information: critical recipients do not forward messages thoughtlessly.

Political action

There are many approaches to confronting Fake News, but who should decide which approaches are most suitable, and how? We strongly believe that our communication systems require global political action that sets clear rules for digital platform operators, users and society as a whole. However, any legislation that aims to control misinformation and Fake News inevitably limits freedom of speech at the same time. Policymakers must therefore discuss this trade-off at a global level. Whereas autocratic regimes have found ways to control digital communication channels, liberal democracies are struggling to find appropriate answers. While it is a good sign that fundamental rights are not given up lightly, it is time to act.

We have the chance to use the lessons of recent years to redefine the interplay between different communication channels, traditional and digital. This is an opportunity to establish an integrated system that combines traditional media and digital platforms and can react instantly to inaccurate information. If we manage to see digital and traditional channels not as competitors but as complements, we might all benefit from positive feedback loops across all channels. However, the journey to such a functioning system is long and requires clear political guidance and boundaries!

Having successfully introduced the GDPR privacy laws, the European Union could stand together and take the next step in this discussion about digital platforms. We strongly encourage European leaders to seize the chance to transform our communication systems, for good!